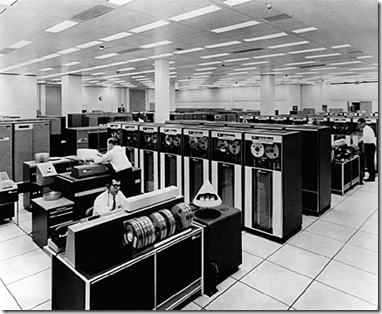

Maintaining and operating these early computer systems was extremely complex, and massive cables were required to connect all their components. They also consumed an enormous amount of power and had to be cooled constantly to avoid overheating. These huge, room-sized machines needed a lot of space and a controlled environment, which led to the practice of secluding them in dedicated rooms: it was the birth of the datacenter.

From the late 1950s and into the 1960s, computers gradually moved from vacuum tubes to transistors, which made them more durable, smaller, more efficient, more reliable and less expensive. One of the first transistorized computers, TRADIC, was developed in 1954, but commercial models emerged only a few years later with the first transistorized IBM systems.

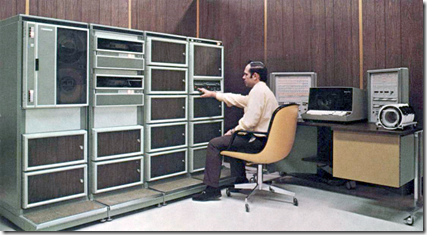

While mainframes occupied large rooms and consumed huge resources, a new type of machine appeared in the mid-1960s. Smaller and cheaper than the mammoth mainframes, these machines were called minicomputers, precisely in contrast to the gigantic dimensions and price of their predecessors. This type of computer eventually established itself as a class apart, with its own architectures and operating systems.

In 1971 Intel launched the first commercial microprocessor, the 4004, which was a huge technological leap. The growing performance of all systems created the need to optimize the use of expensive mainframe hardware by separating it into smaller units, in order to take full advantage of the still scarce computing resources.

This is how virtualization came to be: very basic in the beginning, but conceptually evolving very rapidly to allow mainframes to run several tasks concurrently. The first commercial release of virtualization came in 1972 with the IBM VM/370 operating system.

Moreover, with the emergence of the microprocessor, the term "minicomputer" soon came to mean a machine in the middle range of the computing spectrum, between the smallest mainframes and the brand-new microcomputers. These midrange machines grew to have relatively high processing capabilities and were used in industrial process control, telephone switching and laboratory equipment control; in the 1970s, they were also the hardware platform that launched the computer-aided design (CAD) industry.

Throughout that decade, datacenters began documenting formal disaster recovery plans, alongside the development of local networks that allowed multiple, increasingly powerful machines to be interconnected. But at the end of the decade, microcomputers became a viable alternative to aging mainframes because they were smaller and cheaper. Originally designed for scientific and engineering applications, microcomputers were quickly adapted for enterprise applications, and because they were easily air-cooled they made their way into offices. This led to the almost total extinction of the once all-powerful mainframes and of the datacenters with complex cooling systems, which were now considered obsolete.
